Prompt Types

Prompt engineering applies a range of prompting techniques to steer AI models toward better responses. The sections below define the key concepts and the most common prompt types used when interacting with AI systems.

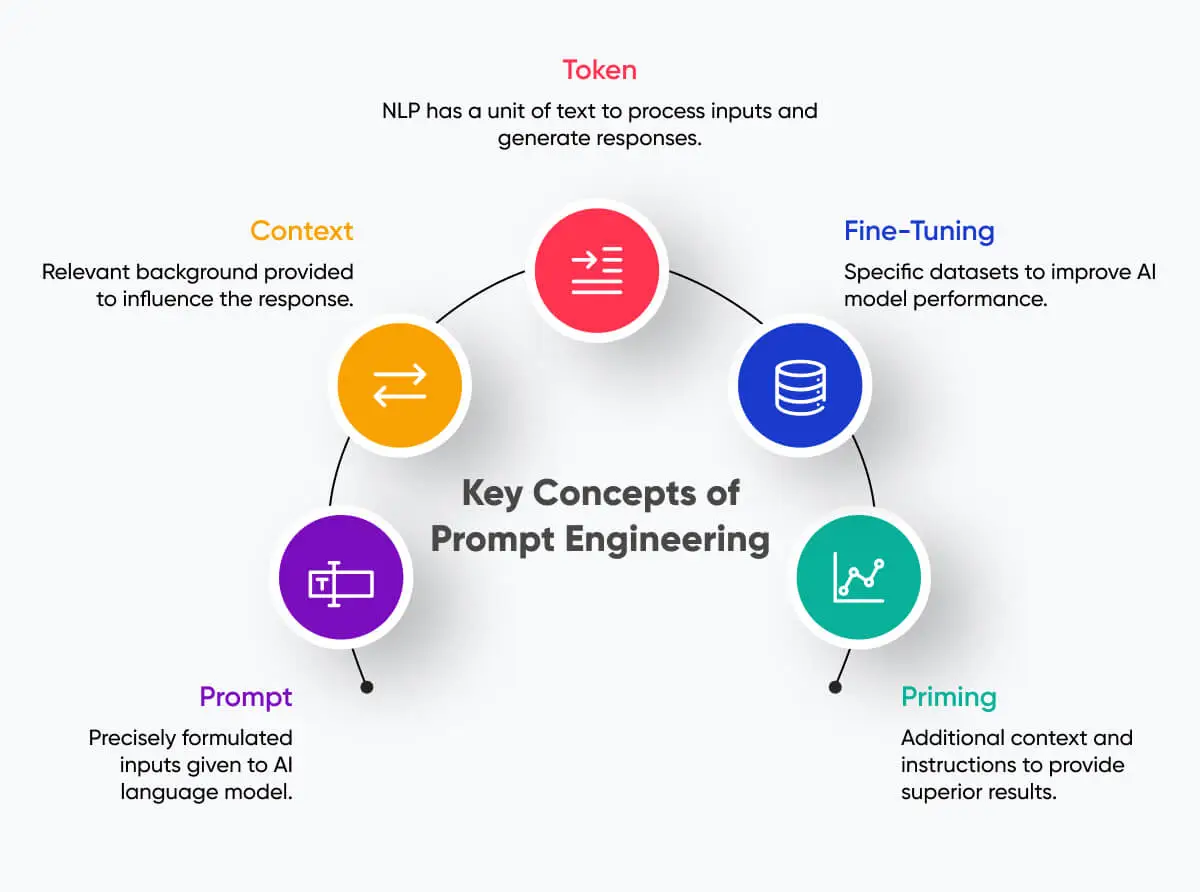

Key Concepts of Prompt Engineering

Token

A token is the small unit of text that an AI model processes when reading a prompt and generating a response. Depending on the model's tokenizer, a token may be a whole word, part of a word, or a single character.
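
As a rough illustration of the idea, the sketch below splits text into word and punctuation tokens with a regular expression. The function name `toy_tokenize` is hypothetical, and production models typically use learned subword tokenizers, so real token boundaries and counts will differ.

```python
import re


def toy_tokenize(text: str) -> list:
    """Split text into word and punctuation tokens (illustrative only)."""
    # \w+ matches runs of letters/digits; [^\w\s] matches one punctuation mark.
    return re.findall(r"\w+|[^\w\s]", text)


tokens = toy_tokenize("Prompt engineering isn't magic.")
print(tokens)
print(len(tokens), "tokens")
```

Even this toy splitter shows that one word ("isn't") can become several tokens, which is why token counts usually exceed word counts.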

Context

Context refers to the background information provided in a prompt that helps the AI model understand the task more accurately and produce better responses.
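
A minimal sketch of adding context to a prompt is shown here. The helper `build_prompt` and the "Context:" / "Question:" labels are assumptions chosen for illustration, not a required format.

```python
from typing import Optional


def build_prompt(question: str, context: Optional[str] = None) -> str:
    """Prepend optional background information to a question."""
    if context is None:
        return question
    return f"Context:\n{context}\n\nQuestion:\n{question}"


# Without context, the model has nothing to ground its answer in.
bare = build_prompt("When does the warranty expire?")

# With context, the same question becomes answerable.
grounded = build_prompt(
    "When does the warranty expire?",
    context="The customer bought a laptop on 2024-03-01 with a 2-year warranty.",
)
print(grounded)
```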

Fine-Tuning

Fine-tuning adapts a pre-trained AI model to a specific task or domain by training it further on an additional, tailored dataset.
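
Fine-tuning data is often prepared as input/output pairs. The sketch below serializes a few hypothetical examples as JSON Lines; the `prompt` and `completion` field names are assumptions, since the expected schema depends on the training tool or provider.

```python
import json

# Hypothetical task-specific examples; field names are assumed, not standard.
examples = [
    {"prompt": "Classify the ticket: 'My invoice is wrong.'", "completion": "billing"},
    {"prompt": "Classify the ticket: 'The app crashes on login.'", "completion": "technical"},
]


def to_jsonl(records: list) -> str:
    """Serialize each record as one JSON object per line."""
    return "\n".join(json.dumps(record) for record in records)


training_data = to_jsonl(examples)
print(training_data)
```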

Priming

Priming involves giving additional instructions or examples before the main prompt so that the AI model produces more relevant and accurate results.
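
One common way to prime a model is to place the extra instructions in a message that precedes the main prompt. The sketch below assumes a chat-style list of role/content dictionaries, a convention used by many chat interfaces; the helper name `primed_messages` is hypothetical.

```python
def primed_messages(priming: str, main_prompt: str) -> list:
    """Return a message list with the priming text ahead of the main prompt."""
    return [
        {"role": "system", "content": priming},    # priming comes first
        {"role": "user", "content": main_prompt},  # main prompt follows
    ]


messages = primed_messages(
    "You are a concise travel guide. Answer in at most two sentences.",
    "What should I see in Lisbon?",
)
for message in messages:
    print(f"{message['role']}: {message['content']}")
```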

Prompt

A prompt is the input or instruction given to an AI model to generate a response. Well-structured prompts help guide the AI toward better and more useful outputs.

Zero-Shot Prompt

Zero-shot prompting asks an AI model to perform a task without providing examples. The model relies on its pre-trained knowledge to generate a response.
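
A zero-shot prompt can be sketched as a task description followed directly by the input, with no worked examples. The helper `zero_shot_prompt` and the "Text:" / "Answer:" labels are illustrative assumptions.

```python
def zero_shot_prompt(task: str, text: str) -> str:
    """Build a prompt that states the task and input, with no examples."""
    return f"{task}\n\nText: {text}\nAnswer:"


prompt = zero_shot_prompt(
    "Classify the sentiment of the text as positive or negative.",
    "The battery lasts all day.",
)
print(prompt)
```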

Few-Shot Prompt

Few-shot prompting provides a few examples before asking the AI to complete a task. This helps the model understand the expected pattern or format.
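
A few-shot prompt might be assembled as sketched here: labeled examples come first, and the final entry is left unlabeled for the model to complete. The helper `few_shot_prompt` and the sample reviews are hypothetical.

```python
def few_shot_prompt(task: str, examples: list, query: str) -> str:
    """Build a prompt with labeled examples followed by an unlabeled query."""
    lines = [task, ""]
    for text, label in examples:
        lines.append(f"Text: {text}")
        lines.append(f"Sentiment: {label}")
        lines.append("")
    # The final entry repeats the pattern but leaves the label blank.
    lines.append(f"Text: {query}")
    lines.append("Sentiment:")
    return "\n".join(lines)


prompt = few_shot_prompt(
    "Classify each text as positive or negative.",
    [("I love this phone.", "positive"), ("The screen cracked in a week.", "negative")],
    "The battery lasts all day.",
)
print(prompt)
```

Keeping every example in the same layout as the final query is what lets the model infer the expected pattern.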

Chain-of-Thought Prompt

Chain-of-thought prompting asks the AI to lay out its reasoning step by step before giving an answer. This technique can improve accuracy on complex reasoning and problem-solving tasks.
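
In practice, a chain-of-thought prompt often just appends an explicit request for step-by-step reasoning to the question. The wording and the helper `chain_of_thought_prompt` below are assumptions; other phrasings may work as well.

```python
def chain_of_thought_prompt(question: str) -> str:
    """Ask for step-by-step reasoning followed by a clearly marked answer."""
    return (
        f"Question: {question}\n"
        "Work through the problem step by step, then give the final answer "
        "on its own line, prefixed with 'Answer:'."
    )


prompt = chain_of_thought_prompt(
    "A train travels 120 km in 2 hours. How far does it travel in 5 hours "
    "at the same speed?"
)
print(prompt)
```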

Instruction Prompt

Instruction prompting gives the AI model clear directions about what task to perform and how to structure the output. Explicit instructions make it more likely that the model returns accurate results in the expected form.
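
An instruction prompt can be sketched as a task statement plus an explicit output specification. The helper `instruction_prompt` and its section labels are hypothetical, chosen only to show the structure.

```python
def instruction_prompt(task: str, output_format: str, constraints: list) -> str:
    """Combine a task, an output format, and optional constraints."""
    parts = [f"Task: {task}", f"Output format: {output_format}"]
    if constraints:
        parts.append("Constraints:")
        parts.extend(f"- {constraint}" for constraint in constraints)
    return "\n".join(parts)


prompt = instruction_prompt(
    task="Summarize the article below for a general audience.",
    output_format="A bulleted list of exactly three points.",
    constraints=["Use plain language.", "Keep each point under 20 words."],
)
print(prompt)
```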